Feature Store

What is a feature store

Let's motivate the need for a feature store by chronologically looking at what challenges developers face in their current workflows. Suppose we had a task where we needed to predict something for an entity (ex. user) using their features.

- Duplication: feature development in isolation (for each unique ML application) can lead to duplication of efforts (setting up ingestion pipelines, feature engineering, etc.).

Solution: create a central feature repository where the entire team contributes maintained features that anyone can use for any application.

- Skew: we may have different pipelines for generating features for training and serving which can introduce skew through the subtle differences.

Solution: create features using a unified pipeline and store them in a central location that the training and serving pipelines pull from.

- Values: once we set up our data pipelines, we need to ensure that our input feature values are up-to-date so we aren't working with stale data, while maintaining point-in-time correctness so we don't introduce data leaks.

Solution: retrieve input features for the respective outcomes by pulling what's available when a prediction would be made.

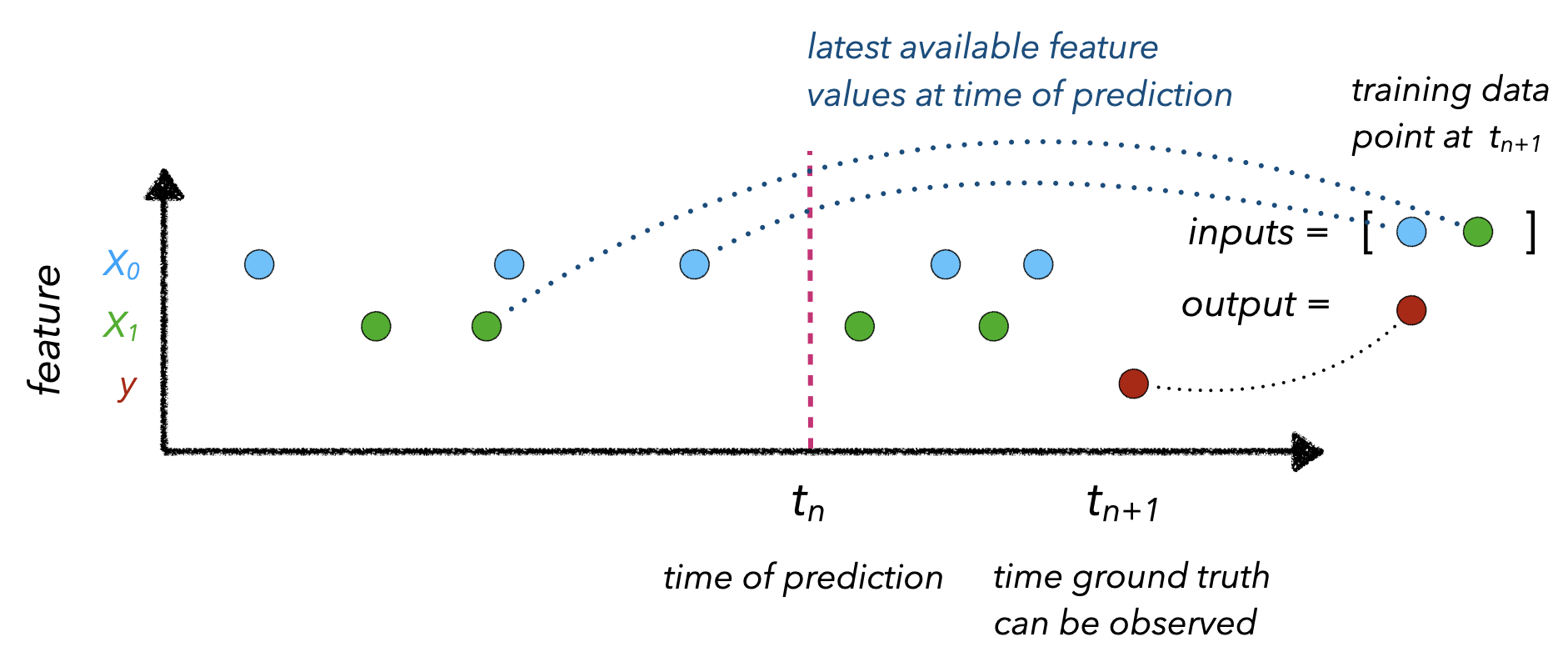

Point-in-time correctness refers to mapping the appropriately up-to-date input feature values to an observed outcome at \(t_{n+1}\). This involves knowing the time (\(t_n\)) that a prediction is needed so we can collect feature values (\(X\)) at that time.
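A minimal sketch of a point-in-time lookup in plain Python, assuming each entity has a time-sorted list of (timestamp, value) feature records. The function name `point_in_time_value` and the sample data are hypothetical; the idea is only that we take the latest value recorded at or before \(t_n\) and never look past it.

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical feature history for one entity: (recorded_at, value), sorted by time.
history = [
    (datetime(2022, 1, 1), 3),
    (datetime(2022, 1, 5), 7),
    (datetime(2022, 1, 9), 12),
]

def point_in_time_value(history, prediction_time):
    """Return the latest value recorded at or before prediction_time, or None."""
    timestamps = [ts for ts, _ in history]
    idx = bisect_right(timestamps, prediction_time)
    if idx == 0:
        return None  # nothing was known yet at prediction time
    return history[idx - 1][1]

# A prediction made on Jan 6 may only see the value recorded on Jan 5.
print(point_in_time_value(history, datetime(2022, 1, 6)))  # 7
```

Using the value from Jan 9 for a prediction made on Jan 6 would leak future information into training, which is exactly what point-in-time correctness rules out.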

When actually constructing our feature store, there are several core components we need to have to address these challenges:

- data ingestion: ability to ingest data from various sources (databases, data warehouse, etc.) and keep them updated.

- feature definitions: ability to define entities and corresponding features

- historical features: ability to retrieve historical features to use for training.

- online features: ability to retrieve features from a low latency origin for inference.

Each of these components is fairly easy to set up, but connecting them all together requires a managed service, an SDK layer for interactions, etc. Instead of building from scratch, it's best to leverage one of the production-ready feature store options such as Feast, Hopsworks, Tecton, Rasgo, etc. The large cloud providers also have their own feature store options (Amazon's SageMaker Feature Store, Google's Vertex AI, etc.).

Tip

We highly recommend that you explore this lesson after completing the previous lessons since the topics (and code) are iteratively developed. We did, however, create the feature-store repository for a quick overview with an interactive notebook.

Over-engineering

Not all machine learning platforms require a feature store. In fact, our use case is a perfect example of a task that does not benefit from a feature store. All of our data points are independent, stateless and come from the client side, and there is no entity whose features change over time. The real utility of a feature store shines when we need to have up-to-date features for an entity that we continually generate predictions for. For example, a user's behavior (clicks, purchases, etc.) on an e-commerce platform or the deliveries a food runner made in the last hour.

When do I need a feature store?

To answer this question, let's revisit the main challenges that a feature store addresses:

- Duplication: if we don't have too many ML applications/models, we don't really need to add the additional complexity of a feature store to manage transformations. All the feature transformations can be done directly inside the model processing or as a separate function. We could even organize these transformations in a separate central repository for other team members to use. But this quickly becomes difficult to use because developers still need to know which transformations to invoke and which are compatible with their specific models, etc.

Note

Additionally, if the transformations are compute intensive, then they'll incur a lot of costs by running on duplicate datasets across different applications (as opposed to having a central location with up-to-date transformed features).

- Skew: similar to duplication of efforts, if our transformations can be tied to the model or kept as a standalone function, then we can just reuse the same pipelines to produce the feature values for training and serving. But this becomes complex and compute intensive as the number of applications, features and transformations grows.

- Value: if we aren't working with features that need to be computed server-side (batch or streaming), then we don't have to worry about concepts like point-in-time correctness, etc. However, if we are, a feature store can allow us to retrieve the appropriate feature values across all data sources without the developer having to worry about using disparate tools for different sources (batch, streaming, etc.).
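One way to picture the skew point is a minimal sketch in which training and serving call the same transformation function. The function name `build_features` and the input fields are hypothetical and only stand in for whatever preprocessing a project uses.

```python
def build_features(raw):
    """Single transformation shared by training and serving (hypothetical fields)."""
    text = f"{raw['title']} {raw['description']}".lower().strip()
    return {"text": text, "num_tokens": len(text.split())}

# Training path: transform a batch of historical records.
training_rows = [
    {"title": "Feature Stores", "description": "Why they matter"},
    {"title": "Point-in-time", "description": "Avoiding leaks"},
]
training_features = [build_features(row) for row in training_rows]

# Serving path: transform a single incoming request with the same function.
serving_features = build_features(
    {"title": "Feature Stores", "description": "Why they matter"}
)

# Identical inputs give identical features in both paths.
assert serving_features == training_features[0]
```

This works well for a handful of models. Once many applications share many transformations, keeping every caller on the same function version is the part a feature store takes over.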

Feast

We're going to leverage Feast as the feature store for our application for its ease of local setup, SDK for training/serving, etc.

# Install Feast and dependencies

pip install feast==0.10.5 PyYAML==5.3.1 -q

👉 Follow along with the interactive notebook in the feature-store repository as we implement the concepts below.

Set up

We're going to create a feature repository at the root of our project. Feast will create a configuration file for us and we're going to add an additional features.py file to define our features.

Traditionally, the feature repository would be its own isolated repository that other services use to read and write features.

mkdir -p stores/feature

mkdir -p data

feast init --minimal --template local features

cd features

touch features.py

Creating a new Feast repository in /content/features.

The initialized feature repository (with the additional file we've added) will include:

features/

├── feature_store.yaml - configuration

└── features.py - feature definitions

We're going to configure the locations for our registry and online store (SQLite) in our feature_store.yaml file.

- registry: contains information about our feature repository, such as data sources, feature views, etc. Since it's in a DB, instead of a Python file, it can very quickly be accessed in production.

- online store: DB (SQLite for local) that stores the (latest) features for defined entities to be used for online inference.

If all our feature definitions look valid, Feast will sync the metadata about Feast objects to the registry. The registry is a tiny database storing most of the same information you have in the feature repository. This step is necessary because the production feature serving infrastructure won't be able to access Python files in the feature repository at run time, but it will be able to efficiently and securely read the feature definitions from the registry.

When we run Feast locally, the offline store is effectively represented via Pandas point-in-time joins, whereas in production the offline store can be something more robust like Google BigQuery, Amazon Redshift, etc.

We'll go ahead and paste this into our features/feature_store.yaml file (the notebook cell does this automatically):

    project: features
    registry: ../stores/feature/registry.db
    provider: local
    online_store:
        path: ../stores/feature/online_store.db

Data ingestion

The first step is to establish connections with our data sources (databases, data warehouse, etc.). Feast requires its data sources to come from a file (Parquet), a data warehouse (BigQuery) or a data stream (Kafka / Kinesis). We'll convert our generated features file from the DataOps pipeline (features.json) into a Parquet file, which is a columnar (column-major) data format that allows fast feature retrieval and caching benefits (in contrast to row-major data formats such as CSV, where we have to traverse every single row to collect feature values).
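To illustrate the row-major vs. column-major point, here is a small sketch in plain Python (not Parquet itself). The sample records are made up; the point is that a columnar layout lets us read one feature as a single sequence instead of visiting every row.

```python
# Row-major layout (CSV-like): each record holds all of its fields together.
rows = [
    {"id": 1, "text": "intro to transformers", "tag": "nlp"},
    {"id": 2, "text": "image segmentation", "tag": "cv"},
    {"id": 3, "text": "graph embeddings", "tag": "graph"},
]

# Collecting one feature means touching every record.
tags_from_rows = [row["tag"] for row in rows]

# Column-major layout (Parquet-like): each field is stored as its own sequence.
columns = {key: [row[key] for row in rows] for key in rows[0]}

# Collecting one feature is a single lookup of an already-assembled column.
tags_from_columns = columns["tag"]

assert tags_from_rows == tags_from_columns
```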

Feature definitions

Now that we have our data source prepared, we can define our features for the feature store.

The first step is to define the location of the features (FileSource in our case) and the timestamp column for each data point.

Next, we need to define the main entity that each data point pertains to. In our case, each project has a unique ID with features such as text and tags.

Finally, we're ready to create a FeatureView that loads specific features (features), of various value types, from a data source (input) for a specific period of time (ttl).

So let's go ahead and define our feature views by moving this code into our features/features.py script (the notebook cell does this automatically):

    from datetime import datetime
    from pathlib import Path

    from feast import Entity, Feature, FeatureView, ValueType
    from feast.data_source import FileSource
    from google.protobuf.duration_pb2 import Duration

    # Read data
    START_TIME = "2020-02-17"
    project_details = FileSource(
        path="/content/data/features.parquet",
        event_timestamp_column="created_on",
    )

    # Define an entity for the project
    project = Entity(
        name="id",
        value_type=ValueType.INT64,
        description="project id",
    )

    # Define a Feature View for each project
    # Can be used for fetching historical data and online serving
    project_details_view = FeatureView(
        name="project_details",
        entities=["id"],
        ttl=Duration(
            seconds=(datetime.today() - datetime.strptime(START_TIME, "%Y-%m-%d")).days * 24 * 60 * 60
        ),
        features=[
            Feature(name="text", dtype=ValueType.STRING),
            Feature(name="tag", dtype=ValueType.STRING),
        ],
        online=True,
        input=project_details,
        tags={},
    )

Once we've defined our feature views, we can apply them to push a version-controlled definition of our features to the registry for fast access. This also configures the registry and online store that we've defined in our feature_store.yaml.

cd features

feast apply

Registered entity id

Registered feature view project_details

Deploying infrastructure for project_details

Historical features

Once we've registered our feature definitions, along with the data source, entity definition, etc., we can use them to fetch historical features. This is done via point-in-time joins on the provided timestamps, using pandas for our local setup or BigQuery, Hive, etc. as an offline DB for production.
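A hedged sketch of what fetching historical features might look like, assuming Feast 0.10.x (where `get_historical_features` takes an `entity_df` and `feature_refs`; later releases renamed some arguments) and that pandas is installed. The helper names `build_entity_rows` and `fetch_historical_features`, the project IDs and the timestamp are illustrative and not taken from the lesson.

```python
from datetime import datetime

def build_entity_rows(project_ids, timestamp):
    """One row per entity: the ID plus the time at which features should be valid."""
    return [{"id": pid, "event_timestamp": timestamp} for pid in project_ids]

def fetch_historical_features(repo_path, entity_rows):
    """Point-in-time join of entity rows against the offline store (assumes Feast 0.10.x)."""
    # Imported lazily so the helper above works without Feast/pandas installed.
    import pandas as pd
    from feast import FeatureStore

    store = FeatureStore(repo_path=repo_path)
    entity_df = pd.DataFrame(entity_rows)
    return store.get_historical_features(
        entity_df=entity_df,
        feature_refs=["project_details:text", "project_details:tag"],
    ).to_df()

# Hypothetical project IDs; the timestamp marks when a prediction would be made.
entity_rows = build_entity_rows([1, 2, 3], datetime(2022, 6, 23))
# training_df = fetch_historical_features("features", entity_rows)
```

The final call is left commented out because it needs the feature repository created earlier in this lesson and an applied registry.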

Materialize

For online inference, we want to retrieve features very quickly via our online store, as opposed to fetching them from slow joins. However, the features are not in our online store just yet, so we'll need to materialize them first.

cd features

CURRENT_TIME=$(date -u +"%Y-%m-%dT%H:%M:%S")

feast materialize-incremental $CURRENT_TIME

Materializing 1 feature views to 2022-06-23 19:16:05+00:00 into the sqlite online store. project_details from 2020-02-17 19:16:06+00:00 to 2022-06-23 19:16:05+00:00: 100%|██████████████████████████████████████████████████████████| 955/955 [00:00<00:00, 10596.97it/s]

This has moved the features for all of our projects into the online store since this was the first time materializing to the online store. When we subsequently run the materialize-incremental command, Feast keeps track of previous materializations, so we'll only materialize the new data since the last attempt.
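The bookkeeping behind incremental materialization can be sketched in plain Python. This is a conceptual illustration, not Feast's implementation: the `OnlineStore` class, its `last_materialized` watermark and the sample rows are all hypothetical.

```python
from datetime import datetime

class OnlineStore:
    """Toy online store that keeps only the latest feature row per entity."""

    def __init__(self):
        self.latest = {}               # entity id -> (timestamp, features)
        self.last_materialized = None  # end time of the previous run

    def materialize_incremental(self, offline_rows, end_time):
        """Copy rows newer than the previous run (and up to end_time) into the store."""
        count = 0
        for row in offline_rows:
            ts = row["created_on"]
            if self.last_materialized is not None and ts <= self.last_materialized:
                continue  # already covered by an earlier run
            if ts > end_time:
                continue  # outside the requested window
            current = self.latest.get(row["id"])
            if current is None or ts >= current[0]:
                self.latest[row["id"]] = (ts, {"tag": row["tag"]})
            count += 1
        self.last_materialized = end_time
        return count

offline_rows = [
    {"id": 1, "created_on": datetime(2022, 1, 1), "tag": "nlp"},
    {"id": 2, "created_on": datetime(2022, 2, 1), "tag": "cv"},
]
store = OnlineStore()
first = store.materialize_incremental(offline_rows, datetime(2022, 3, 1))   # both rows
offline_rows.append({"id": 1, "created_on": datetime(2022, 4, 1), "tag": "mlops"})
second = store.materialize_incremental(offline_rows, datetime(2022, 5, 1))  # only the new row
```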

Online features

Once we've materialized the features (or sent them directly to the online store in the stream scenario), we can use the online store to retrieve features.
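A hedged sketch of an online lookup, again assuming Feast 0.10.x (where `get_online_features` takes `feature_refs` and `entity_rows`). The helper names `feature_refs_for` and `fetch_online_features` and the project ID in the commented call are illustrative assumptions.

```python
def feature_refs_for(view_name, feature_names):
    """Build "<feature view>:<feature>" references for the given features."""
    return [f"{view_name}:{name}" for name in feature_names]

def fetch_online_features(repo_path, project_ids):
    """Read the latest materialized features for the given IDs (assumes Feast 0.10.x)."""
    # Imported lazily so the helper above works without Feast installed.
    from feast import FeatureStore

    store = FeatureStore(repo_path=repo_path)
    return store.get_online_features(
        feature_refs=feature_refs_for("project_details", ["text", "tag"]),
        entity_rows=[{"id": pid} for pid in project_ids],
    ).to_dict()

refs = feature_refs_for("project_details", ["text", "tag"])
# feature_vector = fetch_online_features("features", [1000])  # hypothetical project ID
```

The lookup only returns values that have already been materialized, so an ID that was never written to the online store would come back without feature values.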

Architecture

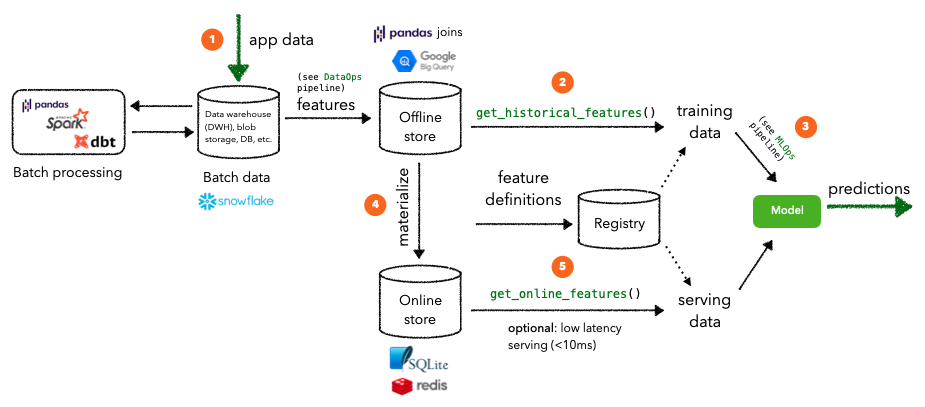

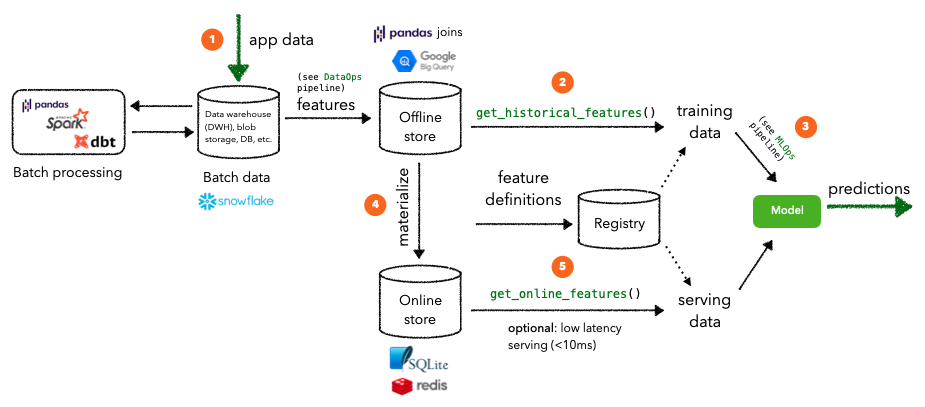

Batch processing

The feature store we implemented above assumes that our task requires batch processing. This means that inference requests on specific entity instances can use features that have been materialized from the offline store. Note that they may not be the most recent feature values for that entity.

- Application data is stored in a database and/or a data warehouse, etc., and goes through the necessary pipelines to be prepared for downstream applications (analytics, machine learning, etc.).

- These features are written to the offline store, which can then be used to retrieve historical training data to train a model with. In our local setup, this was done via Pandas DataFrame joins for given timestamps and entity IDs, but in a production setting, something like Google BigQuery or Hive would receive the feature requests.

- Once we have our training data, we can start the workflows to optimize, train and validate a model.

- We can incrementally materialize features to the online store so that we can retrieve an entity's feature values with low latency. In our local setup, this was done via SQLite for a given set of entities, but in a production setting, something like Redis or DynamoDB would be used.

- These online features are passed on to the deployed model to generate predictions that would be used downstream.

Warning

If our entity (projects) had features that change over time, we would materialize them to the online store incrementally. Since they don't, doing so here would be over-engineering, but it's still important to know how to leverage a feature store for entities whose features change over time.

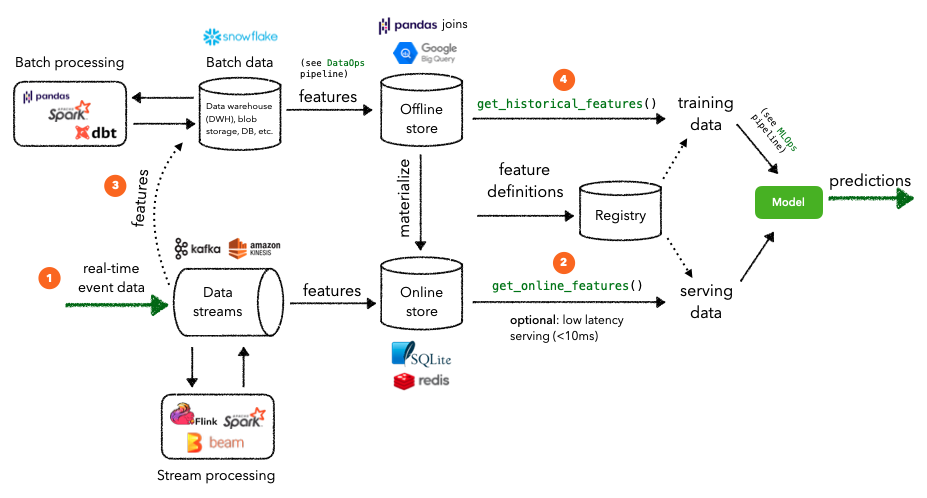

Stream processing

Some applications may require stream processing where we require near real-time feature values to deliver up-to-date predictions at low latency. While we'll still utilize an offline store for retrieving historical data, our application's real-time event data will go directly through our data streams to an online store for serving. An example where stream processing would be needed is when we want to retrieve real-time user session behavior (clicks, purchases) in an e-commerce platform so that we can recommend the appropriate items from our catalog.

- Real-time event data enters our running data streams (Kafka / Kinesis, etc.) where they can be processed to generate features.

- These features are written to the online store, which can then be used to retrieve online features for serving at low latency. In our local setup, this was done via SQLite for a given set of entities, but in a production setting, something like Redis or DynamoDB would be used.

- Streaming features are also written from the data stream to the batch data source (data warehouse, db, etc.) to be processed for generating training data later on.

- Historical data will be validated and used to generate features for training a model. The cadence for how often this happens depends on whether there are data annotation lags, compute constraints, etc.
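The flow above can be sketched with in-memory stand-ins. Everything here is hypothetical (the event fields, the `process_event` function, a dict for the online store and a list for the batch data source); a real setup would use a stream processor and actual stores.

```python
from collections import defaultdict

# Latest features per user, standing in for the online store.
online_store = defaultdict(lambda: {"clicks": 0, "purchases": 0})
# Raw events kept for later training, standing in for the batch data source.
batch_log = []

def process_event(event):
    """Update the user's online features and append the raw event for later training."""
    features = online_store[event["user_id"]]
    if event["type"] == "click":
        features["clicks"] += 1
    elif event["type"] == "purchase":
        features["purchases"] += 1
    batch_log.append(event)
    return dict(features)

stream = [
    {"user_id": "u1", "type": "click", "ts": 1},
    {"user_id": "u1", "type": "purchase", "ts": 2},
    {"user_id": "u2", "type": "click", "ts": 3},
]
for event in stream:
    process_event(event)
```

Serving reads `online_store` for near real-time values, while `batch_log` keeps every event so that training data can later be rebuilt with point-in-time correctness.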

There are a few more components we're not visualizing here, such as the unified ingestion layer (Spark) that connects data from the varied data sources (warehouse, DB, etc.) to the offline/online stores, or low latency serving (<10 ms). We can read more about all of these in the official Feast Documentation, which also has guides to set up a feature store with Feast on AWS, GCP, etc.

Additional functionality

Additional functionality that many feature store providers are currently (or recently) trying to integrate within the feature store platform includes:

- transform: ability to directly apply global preprocessing or feature engineering on top of raw data extracted from data sources.

Current solution: apply transformations as a separate Spark, Python, etc. workflow task before writing to the feature store.

- validate: ability to assert expectations and identify data drift on the feature values.

Current solution: apply data testing and monitoring as upstream workflow tasks before they are written to the feature store.

- discover: ability for anyone in our team to easily discover features that they can leverage for their application.

Current solution: add a data discovery engine, such as Amundsen, on top of our feature store to enable others to search for features.

Reproducibility

Though we could continue to version our training data with DVC whenever we release a version of the model, it might not be necessary. When we pull data from source or compute features, should we save the data itself or just the operations?

- Version the data

- This is okay if (1) the data is manageable, (2) our team is small/early stage ML or (3) changes to the data are infrequent.

- But what happens as the data becomes larger and larger and we keep making copies of it?

- Version the operations

- We could keep snapshots of the data (separate from our projects) and, given the operations and timestamp, execute the operations on those snapshots to recreate the precise data artifact used for training. Many data systems use time-travel to achieve this efficiently.

- But eventually this also results in data storage bulk. What we need is an append-only data source where all changes are kept in a log instead of directly changing the data itself. We can then use the data system with the logs to produce versions of the data as they were, without having to store separate snapshots of the data itself.
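A minimal sketch of the append-only idea in plain Python: every change is a new log entry, and any past version of the dataset is rebuilt by replaying the log up to a timestamp. The log schema and the `dataset_as_of` function are hypothetical.

```python
from datetime import datetime

# Append-only change log: every change is a new entry; nothing is edited in place.
change_log = [
    {"ts": datetime(2022, 1, 1), "id": 1, "op": "upsert", "value": {"tag": "nlp"}},
    {"ts": datetime(2022, 2, 1), "id": 2, "op": "upsert", "value": {"tag": "cv"}},
    {"ts": datetime(2022, 3, 1), "id": 1, "op": "upsert", "value": {"tag": "mlops"}},
    {"ts": datetime(2022, 4, 1), "id": 2, "op": "delete", "value": None},
]

def dataset_as_of(log, as_of):
    """Replay log entries up to as_of to rebuild the dataset as it was then."""
    state = {}
    for entry in sorted(log, key=lambda e: e["ts"]):
        if entry["ts"] > as_of:
            break
        if entry["op"] == "delete":
            state.pop(entry["id"], None)
        else:
            state[entry["id"]] = entry["value"]
    return state

snapshot_feb = dataset_as_of(change_log, datetime(2022, 2, 15))
snapshot_now = dataset_as_of(change_log, datetime(2022, 5, 1))
```

Here `snapshot_feb` still contains both projects with their original tags, while `snapshot_now` reflects the later update and deletion, all without storing a separate copy per version.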

Regardless of the choice above, feature stores are very useful here. Instead of coupling data pulls and feature compute with the time of modeling, we can separate these two processes so that features are up-to-date when we need them. And we can still achieve reproducibility via efficient point-in-time correctness, low latency snapshots, etc. This essentially creates the ability to work with any version of the dataset at any point in time.
